Navigating the 2026 EU-US AI Rights Gap: What Consumers Need to Know

With the EU AI Act’s obligations taking effect as of August 2, 2026, and a growing number of US states, from California to Connecticut, passing AI laws of their own, a “Brussels Effect” is under way that will change the face of global software. But what do these regulations look like to most people? This guide starts from the reality that your personal data is now heavily regulated. Depending on which side of the Atlantic you live on, or even which US state, your right to challenge an AI decision, opt out of model training, and know whether you are speaking to a human differs dramatically.

This guide serves as your “Consumer Impact Audit.” It does not simply tell you that a law is on the books; it shows you where the “Opt-Out” buttons are hiding and teaches you to spot the “Latent Disclosures” that tell you whether an image is machine-generated. By comparing the EU’s risk-based framework with the piecemeal, disclosure-driven approach in the US, you will learn how to check whether a tool is compliant and how to manage your cognitive bandwidth amid increasing automation.

The Right to Know: Transparency and Synthetic Content

The first and most obvious change for any consumer is the end of the era of “Invisible AI.” Under the new 2026 rules, a service can no longer legally conceal, at the moment of interaction, whether you are dealing with a person or with artificial intelligence.

The Mandatory AI Disclosure Notice

If you are in an EU member state, or in a US state such as California or Connecticut, chatbots and customer service tools will need to show you an interaction notice in 2026. This cannot be a hidden link in the footer of the website. It has to be a “front-of-house” disclosure, shown where you will actually see it. Keep your eyes peeled for wording such as “You are interacting with an AI assistant.”

Watermarking and Latent Disclosures

Have you seen more “AI-Generated” tags on social media recently? That is because of the California AI Transparency Act and Article 50 of the EU AI Act. Always check for both “manifest” disclosures (visible labels) and “latent” disclosures (hidden in the file’s metadata). Together they help you confirm whether images and videos are authentic.

AI Literacy as a Protected Right

The EU AI Act introduces what is often described as a “right to AI literacy.” Strictly speaking, Article 4 places the duty on providers and deployers, who must ensure that the staff and others who operate their systems understand them, and this pushes developers to offer clear, intelligible explanations of how their software works. Expect to encounter more “how this works” icons that describe the rationale behind a recommendation or an automated output.

High-Risk Labeling and Accountability

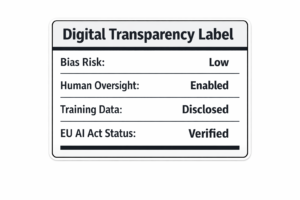

Not all AI is built the same. The key category to watch is “High-Risk” AI: systems used in areas such as education, employment, or health care. In the EU, these systems must be registered in a publicly accessible database, and the provider must put the tool through a “conformity assessment” before it reaches the market. If AI is being used to set the terms of your mortgage or evaluate your job application, you are entitled to ask for an explanation of the role the tool played in the decision.

The Training Opt-Out: Reclaiming Your Data

The most important part of the “Consumer Impact Audit” concerns your data. For many years, photos and emails like yours have been used for training without permission. Closing the rights gap means learning how to take your data out of the training set.

The California Training Data Transparency Law

Since January 2026, California’s AB 2013 has required AI developers to publish a summary of the data used to train their systems. You can now see whether a system’s training datasets include personal information or copyrighted material. This makes it easier to decide where to send a data removal request, because you no longer have to guess which company “harvested” your social media accounts.

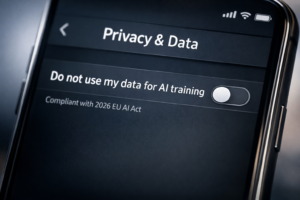

Mastering the Privacy Dashboard

Leading AI companies such as Google and OpenAI now include a “Data Controls” option in their settings menus. Your first step is to switch off an option such as “Improve the model for everyone.” Switching it off tells the provider not to use your interactions to develop new versions of its software, and EU rules and many US state privacy laws back up that choice.

Right to Object and the GDPR Link

In Europe, the AI Act works alongside the GDPR. You can object to your data being used for AI training by invoking your “Right to Object.” In practice, this means filling out a short form on the platform in question, such as Facebook or LinkedIn. Asserting this right requires the platform to stop processing your data under the guise of “research and development.”

Verifying “Compliant” Tools

Is there any way to be sure that a new application follows all these rules? Look for a “Compliance Badge” or a “Conformity Assessment” link. In 2026, reputable providers generally have no problem displaying evidence of conformity. Conversely, a privacy notice that never mentions the EU AI Act or Colorado’s SB 205 suggests that the provider does not take the protection of your data seriously.

Algorithmic Discrimination: Your Right to Recourse

The “Invisible Gap” frequently shows up in how algorithms treat us. This section covers the emerging protections against discrimination and automated “Consequential Decisions.”

The Colorado and Connecticut Protections

States such as Colorado and Connecticut have enacted legislation to address “algorithmic discrimination,” with the rules coming into effect in 2026. If you are refused employment or housing because of an AI decision, you have a right to an explanation of the denial. You are no longer “ghosted” by the algorithm: you have a right to know who the “deployer” of the AI is.

Challenging “High-Risk” Decisions in the EU

The EU AI Act goes a step further by creating a right to file a complaint with a national supervisory authority. If you believe an AI system has infringed your fundamental rights, you can ask for an investigation to be opened. This is an important step, because the company then has to show the authority that its software is not discriminatory.

The Rise of “Human Oversight”

The requirement for a “Human-in-the-Loop” is one of the most important protections in the 2026 rules. For high-risk decisions, a human must be able to override the AI. If you are told that you have been rejected automatically, ask, “Who is the human supervisor of this machine?” “The computer says so” is no longer a legally defensible answer for a firm.

Understanding Prohibited Practices

Under the 2026 EU legal framework, some AI applications that remain gray areas in the US are prohibited outright. The EU does not allow “Social Scoring” (a score based on your behavior or personality) or “Emotion Recognition” in the workplace. Knowing these prohibitions helps you recognize when an application made in another country is asking for access to features that are banned where you live.

The Global “Verification” Audit: Is Your Tool Compliant?

The last step in dealing with the EU-US “AI rights gap” in 2026 is becoming a “Compliant Consumer.” That means auditing your own technology against the new digital safety rules.

Checking for Machine-Readable Indicators

In 2026, content is labeled not only for human readers but also for machines. A savvy consumer first checks whether the content they rely on carries “C2PA” metadata, named after the Coalition for Content Provenance and Authenticity.
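For readers comfortable with a little scripting, the check can be roughly sketched in Python. This is a heuristic under stated assumptions, not a verifier: it only handles JPEG files, it assumes the C2PA manifest sits in APP11 segments as JUMBF boxes labeled “c2pa,” and it does not validate any cryptographic signature. The function names jpeg_app11_payloads and looks_like_c2pa are made up for this illustration. A positive result only means the file appears to carry provenance metadata; use official C2PA tooling to confirm that the claims are genuine.

```python
import struct

APP11 = 0xEB  # JPEG marker segment assumed here to carry C2PA's JUMBF boxes
SOS = 0xDA    # start of scan: compressed image data follows, so stop walking


def jpeg_app11_payloads(data: bytes):
    """Yield the payload of every APP11 segment that precedes the image data."""
    if data[:2] != b"\xff\xd8":      # every JPEG starts with the SOI marker
        return
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:        # malformed segment header: give up
            return
        marker = data[pos + 1]
        if marker == 0xFF:           # fill byte before a marker: skip it
            pos += 1
            continue
        if marker == SOS:
            return
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if length < 2:               # the length field counts its own 2 bytes
            return
        if marker == APP11:
            yield data[pos + 4:pos + 2 + length]
        pos += 2 + length


def looks_like_c2pa(data: bytes) -> bool:
    """Heuristic: True if the APP11 data mentions a JUMBF box labeled c2pa."""
    blob = b"".join(jpeg_app11_payloads(data))
    return b"jumb" in blob and b"c2pa" in blob
```

To try it, read an image as bytes (for example with open("photo.jpg", "rb")) and pass the result to looks_like_c2pa. A False result does not prove that an image is human-made, since metadata is often stripped when files are re-saved or uploaded.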

The Role of the European AI Office

If you use international AI tools, bear in mind that the European AI Office, a body within the European Commission, oversees this field in the EU. You may want to visit the office’s official portal and look for its “Red List” of AI models that do not comply with the standards and are therefore not suitable for further use.

US State “Cure Periods” and Your Rights

Several US laws include a “cure period” during which firms can fix violations without being penalized. If you run into a problem with an AI product, such as a bug or a privacy breach, reporting it to your state Attorney General can start that “cure period.” Your active consumerism helps create a more secure AI environment.

The Shift to “On-Device” AI

One of the key trends for 2026 is “On-Device” AI as a way around the compliance headache. Choosing tools that process information directly on your phone or computer, rather than through cloud services, is one of the best ways to protect yourself. Because no information leaves your device, most such applications involve far less of the “Data Processing” that these laws regulate.

Frequently Asked Questions (FAQ)

What is the 2026 EU-US AI Rights Gap?

The term describes the disparity in consumer rights between the EU’s comprehensive Artificial Intelligence Act (with most obligations applying from August 2026) and the sector-by-sector approach in the US. The EU follows a risk-based model, while US laws lean toward transparency and anti-discrimination rules.

How can I tell if an image is AI-generated in 2026?

Look for a visible “AI-Generated” label, and examine the file’s metadata for embedded C2PA claims. Most large social media sites now flag posts that carry such tags.

Can I opt-out of AI training?

In many cases, yes. In 2026, major platforms offer an opt-out, usually under “Privacy Settings” or “Data Controls.” Disabling “Improve the model for everyone” is the simplest way to keep your data from being used to train future versions of an AI.

What happens if an AI discriminates against me?

Depending on where you are located, you have the right to an explanation and to human review. Under Colorado’s law or the EU AI Act, businesses must be able to demonstrate that systems they know to be high-risk are not discriminatory.

Are all AI tools in 2026 safe?

Safety is relative. The new regulations have banned “unacceptable” risks such as social scoring systems in the EU, but you should still be careful. Check that the tool you use is “Compliant,” and prefer on-device AI processing when handling private data.

Final Conclusion: The Empowered Citizen in the Age of AI

August 2026 marks a significant shift in the relationship between people and machines. We have moved beyond the era of “move fast and break things” and into the age of “move cautiously and record everything.” Understanding the EU-US AI rights gap is no longer just for tech enthusiasts; it is a vital everyday skill.

By knowing your opt-out rights, your right to an explanation, and the transparency labels to look for, you are no longer merely a data point but an active player in the AI economy. This legislation marks the beginning of a new social contract. Tonight, as you go through your privacy controls, remember that each “No” you tick is a vote for a world where technology works for us rather than against us.